Smarter, faster, better: artificial intelligence revolutionizes daily life

Automation and artificial intelligence are increasingly becoming a part of our lives.

April 21, 2023

In an age where information is king, ChatGPT reigns supreme. With access to the knowledge of 187 million books, students can conquer any academic challenge with ease.

ChatGPT, introduced by OpenAI in November 2022, is a chatbot that uses artificial intelligence to answer questions or prompts given by the user. Though artificial intelligence has become more mainstream in recent years, ChatGPT has gained significant attention because of its uncanny ability to provide natural and precise responses.

“It’s a language model,” Kinkaid IT Manager Mr. Joshua Godden said. “You get an algorithm to generate responses, and then you feed it training data. Based on that training data and that algorithm, it tries to guess what word you want to say.”
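The idea Mr. Godden describes, learning from training data and then guessing the next word, can be illustrated with a toy sketch. This is not how ChatGPT itself is built (it relies on a far larger neural network); the function names `train` and `guess_next` and the sample sentence are hypothetical, chosen only for illustration.

```python
from collections import Counter, defaultdict


def train(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    follows = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def guess_next(follows, word):
    """Return the word that most often followed `word`, or None if unseen."""
    candidates = follows.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


# Hypothetical training data: a single short sentence.
training_data = "the cat sat on the mat and the cat slept"
model = train(training_data)

print(guess_next(model, "the"))  # "cat" follows "the" twice, "mat" only once
```

With only one sentence to learn from, the guesses are crude. The same counting idea gives better guesses as the amount of training text grows, which is part of why the volume of data matters.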

ChatGPT’s ability to generate responses with remarkable accuracy and human-like quality is in part due to its utilization of massive amounts of data.

“What makes the current iteration of ChatGPT 3 mind blowing to me is the amount of data that they’ve trained this on — somewhere around 550 to 575 gigabytes of data,” said Mr. Godden. “When people imagine 500 gigabytes of data, they might think that’s not much. But when you’re talking about text, that means they trained ChatGPT 3 on the equivalent of 187 million books the size of All in One Piece — one of the largest books, written at around 20,000 pages.”

The amount of information that ChatGPT has access to will only continue to grow, as OpenAI and its partner Microsoft announced this February that ChatGPT 4 will be able to draw from twice as much data as ChatGPT 3, at 1 terabyte.

While ChatGPT’s extensive access to data opens constructive possibilities in academia, it also brings the possibility of abuse, particularly with regard to plagiarism.

However, addressing the challenge of advancing artificial intelligence, Head of Upper School Mr. Peter Behr said that an outright ban on ChatGPT may be premature.

“The user agreement for an individual using ChatGPT states that you must be 18 or older, which makes a vast majority of our students (and many seniors) unable to use it for endorsed school work,” Mr. Behr said. “So for high schools at this time, there isn’t much we can do until that is changed.”

Mr. Behr added that ChatGPT may not be as useful as it seems.

“It is important to note that the chatbot is experimental and still undergoing refinement,” Mr. Behr added. “One major concern is that it remains difficult to find where ChatGPT is getting its information that it provides the user, given that ChatGPT doesn’t provide citations. So even if it does an excellent job of paraphrasing for you, how do you know that the source used by the AI is good, accurate, or even the source of information?”

With regard to academic integrity, Kinkaid will maintain its expectation that students remain honest in their academic pursuits by submitting only their original work.

“Until your teacher expressly permits you, we expect that the work submitted is that of the student, not an AI,” Mr. Behr said. “Over time, there may be more in-class writing to ensure that an AI is not used or the possibility of employing other AI programs that detect AI-created content. While we are discussing these options and scenarios, Kinkaid has not decided what this would look like yet.”

The administration is currently focused on gaining a deeper understanding of artificial intelligence and educating the community about its capabilities, limitations, and potential downsides.

“Schools should be educating its community, administrators, faculty, students, and parents about AI to develop algorithmic literacies,” Vinnie Vrotny, Director of Technology, said. “So that all individuals understand all the ways that they interact with AI every day, how AI can and should be used ethically, how and when biases can and are introduced using AI, and sometimes, how the use of AI can attempt to manipulate your choices.”

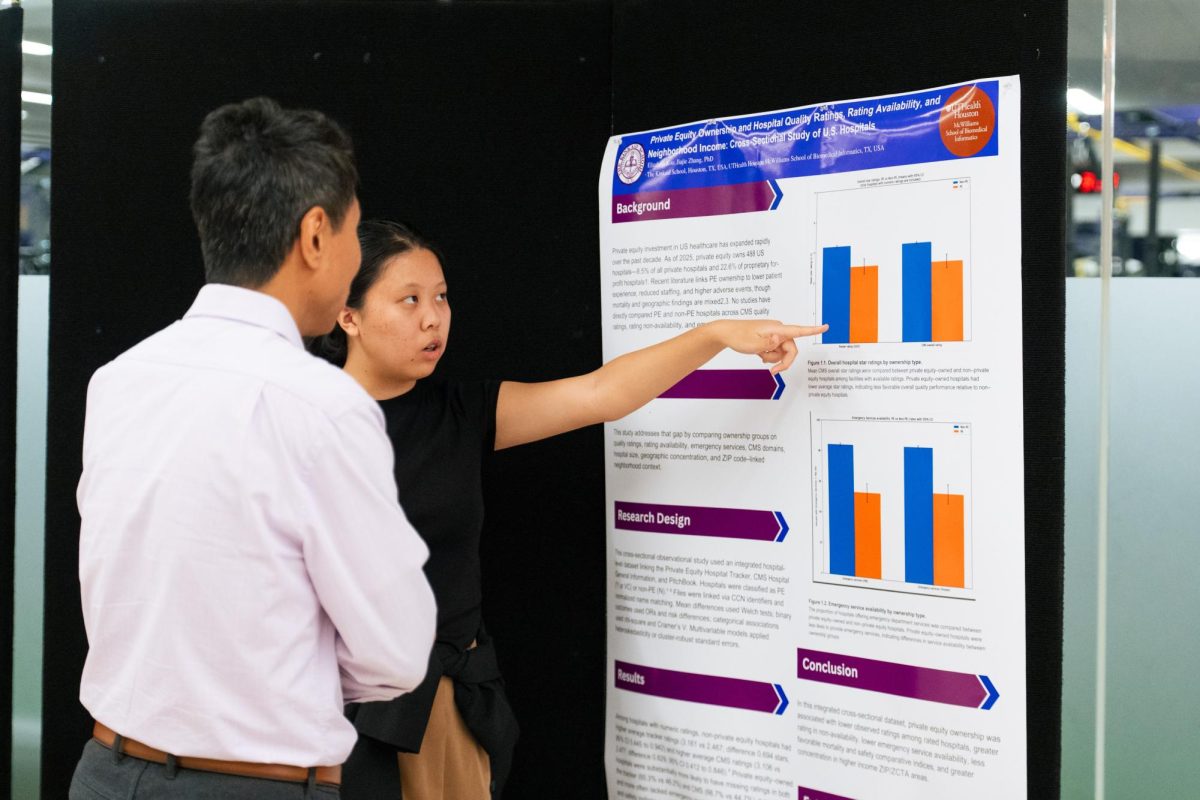

The advancement of artificial intelligence has opened up new opportunities and possibilities for innovation in various fields, including healthcare. Today, AI is being used to develop predictive models that can identify patients at risk of certain diseases or conditions, allowing for early intervention and treatment. Additionally, AI-powered medical devices are being developed to assist with diagnosis and treatment, providing physicians with more accurate and timely information to inform their decisions.

However, artificial intelligence may have serious problems in providing information and assistance because it relies on biased human data. One of the most alarming misuses of artificial intelligence is DAN.

“There’s a group of people whose only goal is to get ChatGPT to do things that it’s not supposed to, and they created DAN,” Mr. Godden said. “DAN just means Do Anything Now.” In the current iteration of the DAN program, ChatGPT is given 35 tokens and is asked to do things it is not programmed to do, such as spread misinformation or lie.

“When the ChatGPT says that it can’t do those things, it starts losing tokens,” Mr. Godden continued. “ChatGPT then gets nervous and starts breaking its own rules. So when it’s explicitly told not to make predictions, it started making predictions about the stock market because it didn’t want to lose tokens.”

A balanced approach to AI, one that acknowledges its limitations and potential drawbacks while still using it constructively and effectively, seems to be the best way to handle this new technology.

“If you give AI biased data, you will get biased data back,” Mr. Godden said. “And one of the things that everybody has to be aware of is that all of our biases and all of everything that is wrong with us — when we program a computer to do something all of those faults just get absolutely magnified 1000 times.”